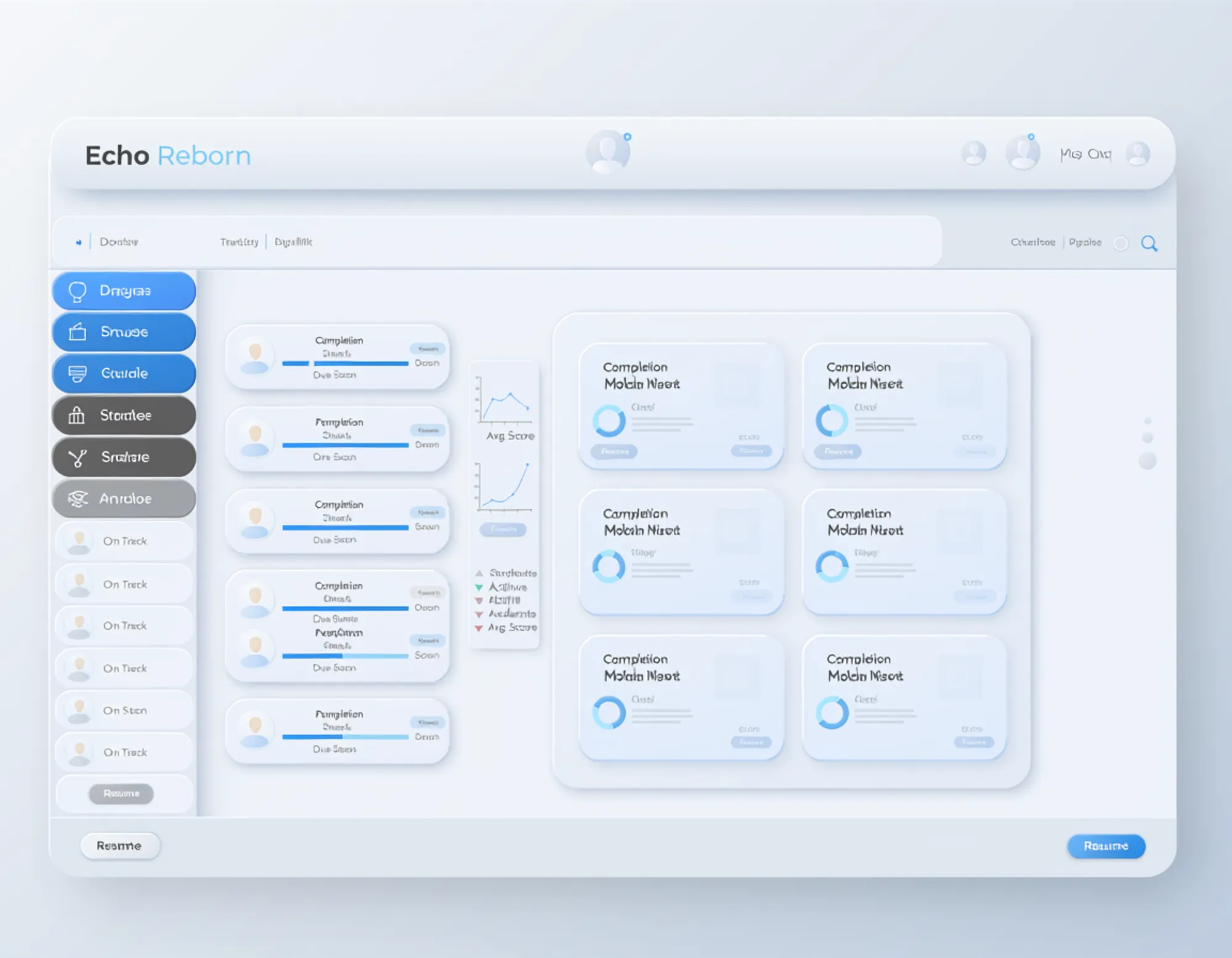

Echo Reborn

In early 2026, Agilix cancelled their contract with New Tech Network — a national K-12 education network spanning 63 schools and 22,000+ students. The LMS those schools depended on was going away, and no commercial replacement could do what they needed.

This is the story of building a full Learning Management System from scratch in approximately 100 days as a zero-revenue gift.

The Problem

The problem wasn’t that NTN needed an LMS. Canvas, Schoology, and Google Classroom exist. The problem was that NTN uses a pedagogical model no commercial LMS supports: multi-outcome assessment.

In a traditional LMS, grading is simple: one student submits one assignment, one teacher gives one score. Student × assignment → single score. Canvas does this. Schoology does this. Every LMS on the market does this.

NTN’s model is fundamentally different. A single student submission gets scored against five independent learning outcomes — Knowledge & Thinking, Written Communication, Oral Communication, Collaboration, and Agency. Each outcome has its own rubric. Each rubric has multiple dimensions (e.g., Claim, Evidence, Reasoning). A teacher scores each dimension independently. The gradebook aggregates by outcome across all activities, not by simple assignment average.

This makes the gradebook four-dimensional:

- Dimension 1: Students (rows)

- Dimension 2: Activities (column groups)

- Dimension 3: Learning outcomes per activity (sub-columns)

- Dimension 4: Rubric dimensions per outcome (drill-down detail)
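To make the four dimensions concrete, here is a minimal TypeScript sketch of how they might nest in memory, plus an aggregation by outcome across activities. The type names, the `aggregateByOutcome` helper, and the aggregation rule (points earned divided by points possible) are assumptions for illustration, not Echo's actual code.

```typescript
// Hypothetical in-memory shapes for the four gradebook dimensions.
type Outcome =
  | "Knowledge & Thinking"
  | "Written Communication"
  | "Oral Communication"
  | "Collaboration"
  | "Agency";

interface DimensionScore {
  dimension: string; // e.g. "Claim", "Evidence", "Reasoning"
  level: number; // rubric level awarded by the teacher
}

interface OutcomeScore {
  outcome: Outcome;
  score: number;
  pointsPossible: number;
  dimensions: DimensionScore[]; // dimension 4: drill-down detail
}

interface ActivityResult {
  studentId: string; // dimension 1: row
  activityId: string; // dimension 2: column group
  outcomes: OutcomeScore[]; // dimension 3: sub-columns
}

// Aggregate one student's results by outcome across all activities.
// Assumed rule: percent = total points earned / total points possible.
function aggregateByOutcome(
  results: ActivityResult[],
  studentId: string
): Map<Outcome, number> {
  const totals = new Map<Outcome, { earned: number; possible: number }>();
  for (const result of results) {
    if (result.studentId !== studentId) continue;
    for (const o of result.outcomes) {
      const t = totals.get(o.outcome) ?? { earned: 0, possible: 0 };
      t.earned += o.score;
      t.possible += o.pointsPossible;
      totals.set(o.outcome, t);
    }
  }
  const percents = new Map<Outcome, number>();
  totals.forEach((t, outcome) => {
    percents.set(outcome, t.possible === 0 ? 0 : (t.earned * 100) / t.possible);
  });
  return percents;
}
```

The point of the sketch is the grouping key: scores roll up per outcome, so an activity that never assessed Collaboration contributes nothing to the Collaboration column.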

No commercial LMS supports this. NTN previously tried building their own (~2015, external vendor) but abandoned it due to bugs and cost. They went with Agilix because Buzz supported multi-outcome scoring. When Agilix cancelled, NTN was stranded.

My wife works at NTN. I named the company after our daughter, Casiana. I built Echo Reborn because I could.

Architecture Overview

Monolith, not microservices. One Next.js app, one Supabase database, one deployment. A solo developer maintaining microservices is a recipe for operational overhead that serves nobody.

Server-first rendering. React Server Components for data-heavy views (gradebook, reports). Client Components only for interactivity (rich text editor, assessment UI, real-time updates).

RLS IS the security model. FERPA compliance is enforced at the database level, not the application layer. Every table has Row Level Security policies. Teacher A never sees Teacher B’s students. School A never sees School B’s data. This isn’t an application-level permission check that can be bypassed — it’s a database constraint that applies to every query.

Tech Stack

- Framework: Next.js 16 (App Router, Server Components), React 19

- Language: TypeScript in strict mode, with zero `as any` casts across the entire codebase (47 were hardened to 0)

- Database: Supabase PostgreSQL with Row Level Security on every table

- UI: Tailwind CSS + shadcn/ui (142 components)

- Editor: Tiptap (rich text content authoring with collaborative editing)

- Auth: Supabase Auth (email/password + SAML SSO for Clever/ClassLink)

- Monitoring: Better Stack (uptime + alerting), Sentry (error tracking)

- Testing: Playwright (761 E2E tests) + Vitest (528 unit tests)

Technical Deep Dive: Multi-Outcome Scoring Schema

The core schema reflects the four-dimensional gradebook model:

```sql
-- What learning outcomes exist (network-level, not school-level)
learning_construct (id, name, slug, description, organization_id, display_order)

-- Which outcomes a specific activity assesses
activity_construct (id, activity_id, learning_construct_id, rubric_id, points_possible, weight)

-- Teacher's score for one outcome on one submission (THE KEY TABLE)
construct_score (id, submission_id, activity_construct_id, score, scored_by, scored_at, feedback, is_self_assessment)

-- Per-dimension breakdown within an outcome score
dimension_score (id, construct_score_id, rubric_dimension_id, level, comment)
```

A single submission generates multiple `construct_score` rows (one per tagged outcome) and multiple `dimension_score` rows within each (one per rubric dimension). The gradebook query joins across all four levels to produce the multi-dimensional view teachers need.
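As a rough illustration of that join, the sketch below nests `dimension_score` rows under their parent `construct_score` and groups the result by submission. The row interfaces mirror only a subset of the columns listed above, the TypeScript column types are assumptions, and `nestScores` is a hypothetical helper; in Echo the join itself happens in SQL.

```typescript
// Row shapes mirroring a subset of the schema columns (types are assumed).
interface ConstructScoreRow {
  id: string;
  submission_id: string;
  activity_construct_id: string;
  score: number;
}

interface DimensionScoreRow {
  id: string;
  construct_score_id: string;
  rubric_dimension_id: string;
  level: number;
}

interface ScoredOutcome extends ConstructScoreRow {
  dimensions: DimensionScoreRow[];
}

// Hypothetical helper: an in-memory analogue of joining construct_score
// to dimension_score and grouping by submission.
function nestScores(
  constructScores: ConstructScoreRow[],
  dimensionScores: DimensionScoreRow[]
): Map<string, ScoredOutcome[]> {
  // Index dimension rows by their parent construct_score id.
  const byParent = new Map<string, DimensionScoreRow[]>();
  for (const d of dimensionScores) {
    const list = byParent.get(d.construct_score_id) ?? [];
    list.push(d);
    byParent.set(d.construct_score_id, list);
  }
  // Attach dimensions to each outcome score, grouped by submission.
  const bySubmission = new Map<string, ScoredOutcome[]>();
  for (const cs of constructScores) {
    const list = bySubmission.get(cs.submission_id) ?? [];
    list.push({ ...cs, dimensions: byParent.get(cs.id) ?? [] });
    bySubmission.set(cs.submission_id, list);
  }
  return bySubmission;
}
```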

Real RLS Policies

FERPA compliance is not a checklist item bolted on at the end — it’s the foundation of the data model. Here’s an actual RLS policy from the codebase that enforces tenant isolation on construct scores:

```sql
-- Teachers can only see scores for students in their courses
CREATE POLICY "construct_score_select_policy" ON construct_score
  FOR SELECT
  USING (
    EXISTS (
      SELECT 1
      FROM submission s
      JOIN activity a ON a.id = s.activity_id
      JOIN course c ON c.id = a.course_id
      JOIN enrollment e ON e.course_id = c.id
      WHERE s.id = construct_score.submission_id
        AND c.organization_id = auth.jwt() ->> 'organization_id'
        AND (
          e.user_id = auth.uid() -- enrolled teacher/student
          OR EXISTS (
            SELECT 1
            FROM app_user u
            WHERE u.id = auth.uid()
              AND u.organization_id = c.organization_id
              AND u.role IN ('school_admin', 'network_admin')
          )
        )
    )
    AND construct_score.deleted_at IS NULL
  );
```

Every security-sensitive function (grade calculations, trend analysis, course grades) also verifies the caller’s authorization before returning data. The `deleted_at IS NULL` guard ensures soft-deleted records (required by FERPA for data retention) are filtered from normal queries.
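One way to reason about the SELECT policy is to restate it as a plain boolean predicate. The sketch below is a simplified TypeScript model, not code from Echo: the `Caller` and `ScoreContext` shapes and the `canSelectConstructScore` name are hypothetical, and it assumes the organization in the JWT claim matches the one on the caller's `app_user` row.

```typescript
type Role = "teacher" | "student" | "school_admin" | "network_admin";

// Hypothetical view of the authenticated caller (auth.uid() plus JWT claim).
interface Caller {
  userId: string;
  organizationId: string;
  role: Role;
}

// Hypothetical facts the policy's joins resolve for one construct_score row.
interface ScoreContext {
  courseOrganizationId: string; // c.organization_id
  enrolledUserIds: string[]; // e.user_id values for the course
  deletedAt: Date | null; // construct_score.deleted_at
}

function canSelectConstructScore(caller: Caller, ctx: ScoreContext): boolean {
  // Soft-deleted rows are never visible.
  if (ctx.deletedAt !== null) return false;
  // Tenant isolation: the course must belong to the caller's organization.
  if (ctx.courseOrganizationId !== caller.organizationId) return false;

  const enrolled = ctx.enrolledUserIds.includes(caller.userId);
  const isAdmin =
    caller.role === "school_admin" || caller.role === "network_admin";
  // The policy inner-joins enrollment, so the admin branch only matches
  // when the course has at least one enrollment row.
  const courseHasEnrollment = ctx.enrolledUserIds.length > 0;
  return enrolled || (isAdmin && courseHasEnrollment);
}
```

A model like this is only a reading aid. The enforcement still lives in the database, and the Playwright isolation tests are what verify the real policy.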

155+ RLS policies across 57 tables. Four security audits caught regressions — including a critical P0 remediation that restored deleted_at guards, revoked excessive anonymous grants, and added auth checks to SECURITY DEFINER grade calculation functions.

FERPA Compliance Architecture

FERPA mandates that education records are protected and access is restricted to authorized personnel only. Echo enforces this through four layers:

- Row Level Security: Database-level tenant isolation. Every query is filtered by `organization_id` and user role. No application code can bypass this.

- Application Scoping: Server Components fetch data through the authenticated Supabase client. The RLS policy runs on every query automatically.

- E2E Verification: Dedicated Playwright test suites verify that Teacher A cannot see Teacher B’s students and that School A cannot see School B’s data.

- Immutable Audit Log: Every PII access is logged with user ID, action, target, timestamp, and IP address. SHA-256 hash chain verification ensures log integrity, so a tampered entry breaks the chain.
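The hash chain idea in the audit log can be sketched in a few lines. This is a minimal illustration using Node's built-in `crypto` module, not Echo's implementation: the `AuditEntry` shape, the all-zero genesis value, and the JSON serialization of the hashed fields are all assumptions.

```typescript
import { createHash } from "node:crypto";

// Hypothetical audit entry; field names follow the list above.
interface AuditEntry {
  userId: string;
  action: string;
  target: string;
  timestamp: string; // ISO 8601
  ipAddress: string;
  prevHash: string; // hash of the previous entry (GENESIS for the first)
  hash: string; // SHA-256 over prevHash plus this entry's fields
}

const GENESIS = "0".repeat(64); // assumed starting value for the chain

function hashEntry(e: Omit<AuditEntry, "hash">): string {
  const payload = JSON.stringify([
    e.prevHash, e.userId, e.action, e.target, e.timestamp, e.ipAddress,
  ]);
  return createHash("sha256").update(payload).digest("hex");
}

// Returns a new log with the entry appended and linked to its predecessor.
function appendEntry(
  log: AuditEntry[],
  fields: Omit<AuditEntry, "hash" | "prevHash">
): AuditEntry[] {
  const last = log[log.length - 1];
  const partial = { ...fields, prevHash: last ? last.hash : GENESIS };
  return [...log, { ...partial, hash: hashEntry(partial) }];
}

// Recomputes every hash; any edited or reordered entry breaks the chain.
function verifyChain(log: AuditEntry[]): boolean {
  let prevHash = GENESIS;
  for (const entry of log) {
    const { hash, ...rest } = entry;
    if (rest.prevHash !== prevHash || hashEntry(rest) !== hash) return false;
    prevHash = hash;
  }
  return true;
}
```

Because each hash covers the previous one, changing a single historical entry invalidates that entry and everything after it, which is what makes tampering detectable.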

Soft deletes via deleted_at timestamps ensure FERPA’s data retention requirements are met while keeping records invisible to normal queries.

Results & Metrics

The numbers from the CTO Technical Assessment (scored 9.85/10):

| Metric | Value |
|---|---|
| Lines of Code | 136,301 |
| Files | 918 |
| Pages | 146 |
| API Routes | 78 |
| UI Components | 142 (shadcn/ui) |
| Database Tables | 57 |
| RLS Policies | 155+ |
| Database Migrations | 131 |
| Test Cases | 1,289 (761 E2E + 528 unit) |
| Commits | 550 |
| `as any` Casts | 0 (hardened from 47) |
| Assessment Score | 9.85/10 |

Cost Economics

Echo runs on Supabase (free tier eligible for small deployments) + Vercel (auto-deploy from GitHub). Total infrastructure cost: $65-75/month.

For 22,000+ students, that’s about $0.003/student/month.

Agilix charged approximately $1/student/month. Canvas and Schoology price at $4-15/student per year for most institutions, with multi-year contract commitments and implementation fees that can reach tens of thousands.

Echo is roughly 300x cheaper than Agilix per student. The entire platform costs less per month than a single Canvas implementation fee.
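The per-student arithmetic can be checked with a short calculation. The inputs are the figures quoted above (22,000 students, $65-75/month of infrastructure, roughly $1/student/month for Agilix); the function and variable names are illustrative only.

```typescript
const studentCount = 22000;
const infraMonthlyUsd = { low: 65, high: 75 };
const agilixPerStudentMonthlyUsd = 1; // approximate figure from the text

// Monthly infrastructure cost spread evenly across all students.
function perStudentMonthly(totalMonthlyUsd: number, students: number): number {
  return totalMonthlyUsd / students;
}

const echoLow = perStudentMonthly(infraMonthlyUsd.low, studentCount);
const echoHigh = perStudentMonthly(infraMonthlyUsd.high, studentCount);

// How many times cheaper Echo is than Agilix at each end of the range.
const ratioAtHighCost = agilixPerStudentMonthlyUsd / echoHigh;
const ratioAtLowCost = agilixPerStudentMonthlyUsd / echoLow;

console.log(`Echo: $${echoLow.toFixed(4)} to $${echoHigh.toFixed(4)} per student per month`);
console.log(`Ratio vs Agilix: ${ratioAtHighCost.toFixed(0)}x to ${ratioAtLowCost.toFixed(0)}x`);
```

Depending on which end of the $65-75 range applies, the ratio lands between roughly 293x and 338x, which is where the "roughly 300x" figure comes from.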

This is possible because there are no sales teams, no account managers, no enterprise support tiers, and no profit margin. It’s a gift.

What I Learned

Migration drift will find you. By v8 of the codebase, migration drift had introduced silent schema inconsistencies between local development and production. This became a critical fix — a migration audit that verified every table, column, and constraint matched between the migration chain and the live database. In a solo project with 131 migrations, automation for detecting drift is essential, not optional.
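A drift check of this kind boils down to diffing two schema snapshots: one derived from replaying the migration chain, one read from the live database (for example from Postgres's `information_schema.columns`). The sketch below shows only the comparison step; the `SchemaSnapshot` shape and `detectDrift` function are hypothetical, and constraints are left out for brevity.

```typescript
// Snapshot shape (assumed): table name -> column name -> column type.
type SchemaSnapshot = Record<string, Record<string, string>>;

interface DriftReport {
  missingTables: string[]; // in migrations, absent from live
  unexpectedTables: string[]; // in live, not produced by migrations
  columnMismatches: string[]; // human-readable column differences
}

function detectDrift(expected: SchemaSnapshot, live: SchemaSnapshot): DriftReport {
  const report: DriftReport = {
    missingTables: [],
    unexpectedTables: [],
    columnMismatches: [],
  };
  for (const [table, expectedCols] of Object.entries(expected)) {
    const liveCols = live[table];
    if (liveCols === undefined) {
      report.missingTables.push(table);
      continue;
    }
    // Columns the migrations expect: must exist with the same type.
    for (const [column, expectedType] of Object.entries(expectedCols)) {
      const liveType = liveCols[column];
      if (liveType === undefined) {
        report.columnMismatches.push(`${table}.${column}: missing in live database`);
      } else if (liveType !== expectedType) {
        report.columnMismatches.push(
          `${table}.${column}: expected ${expectedType}, found ${liveType}`
        );
      }
    }
    // Columns that exist live but that no migration created.
    for (const column of Object.keys(liveCols)) {
      if (!(column in expectedCols)) {
        report.columnMismatches.push(`${table}.${column}: not produced by any migration`);
      }
    }
  }
  for (const table of Object.keys(live)) {
    if (!(table in expected)) report.unexpectedTables.push(table);
  }
  return report;
}
```

Run in CI against a freshly migrated database and the production schema, an empty report becomes a cheap gate before each deploy.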

Type safety is non-negotiable at scale. The codebase started with 47 `as any` casts, shortcuts taken during rapid prototyping. Each one was a lie to the compiler and a potential runtime bug. Hardening all 47 to proper types caught actual bugs and prevented new ones. In a system handling student grades under FERPA, a type error that silently coerces a score is not a minor issue.
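As an example of what hardening a cast can look like, the sketch below replaces a hypothetical `payload as any` with a runtime check on `unknown` input. The `ScoreInput` shape and `parseScoreInput` function are invented for illustration and are not taken from Echo's codebase.

```typescript
interface ScoreInput {
  score: number;
  feedback: string | null;
}

// Validate untrusted input instead of casting it with `as any`.
// A string like "3" is rejected rather than silently treated as a number.
function parseScoreInput(raw: unknown): ScoreInput {
  if (typeof raw !== "object" || raw === null) {
    throw new TypeError("score payload must be an object");
  }
  const record = raw as Record<string, unknown>;
  const score = record["score"];
  const feedback = record["feedback"];

  if (typeof score !== "number" || !Number.isFinite(score)) {
    throw new TypeError("score must be a finite number");
  }
  if (feedback !== undefined && feedback !== null && typeof feedback !== "string") {
    throw new TypeError("feedback must be a string when present");
  }
  return { score, feedback: typeof feedback === "string" ? feedback : null };
}
```

With `as any`, a form value of `"3"` would flow straight into grade arithmetic, where `"3" + 1` yields `"31"`. The explicit check turns that silent coercion into an immediate, visible error.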

RLS policies compound in complexity. The first 20 policies are straightforward. By policy 100, you’re dealing with cross-organization parent access (parents with children at different schools), security-definer function authorization, and regression risks where one migration accidentally drops guards from another. Four security audits caught issues that would have been data exposure incidents. Automated RLS testing — verifying that user A literally cannot query user B’s data — is the only way to maintain confidence at scale.

Build for handoff from day one. Echo will be maintained by people who are not software engineers. Every architecture decision was filtered through the question: “Can someone operate this via the Supabase and Vercel dashboards without touching code?” Monolith over microservices. Managed services over self-hosted. Clear documentation over clever abstractions.

What’s Next

Echo is live at casiana.ai. The immediate roadmap includes:

- AI-powered student feedback — Claude API integration with PII sanitization, providing formative feedback aligned to NTN’s five learning outcomes. Framed as “AI coach,” not “AI grader” — NTN’s pedagogy centers human assessment.

- Common Cartridge export — Standards-compliant content packaging with pedagogical metadata, enabling content portability between Echo and other platforms.

- InkWire integration — Connecting Echo’s activity creation to NTN’s existing AI-powered project design tools.

The competency-based education movement is accelerating — over 2,400 undergraduate CBE programs exist across 1,500+ institutions, and state leaders are actively exploring replacing seat-time requirements with competency demonstrations. Echo’s multi-outcome architecture is built for this future.

But the real measure is simpler: 22,000 students still have a platform. 63 schools didn’t have to compromise their pedagogical model. The work ships, and the students learn.